0:00

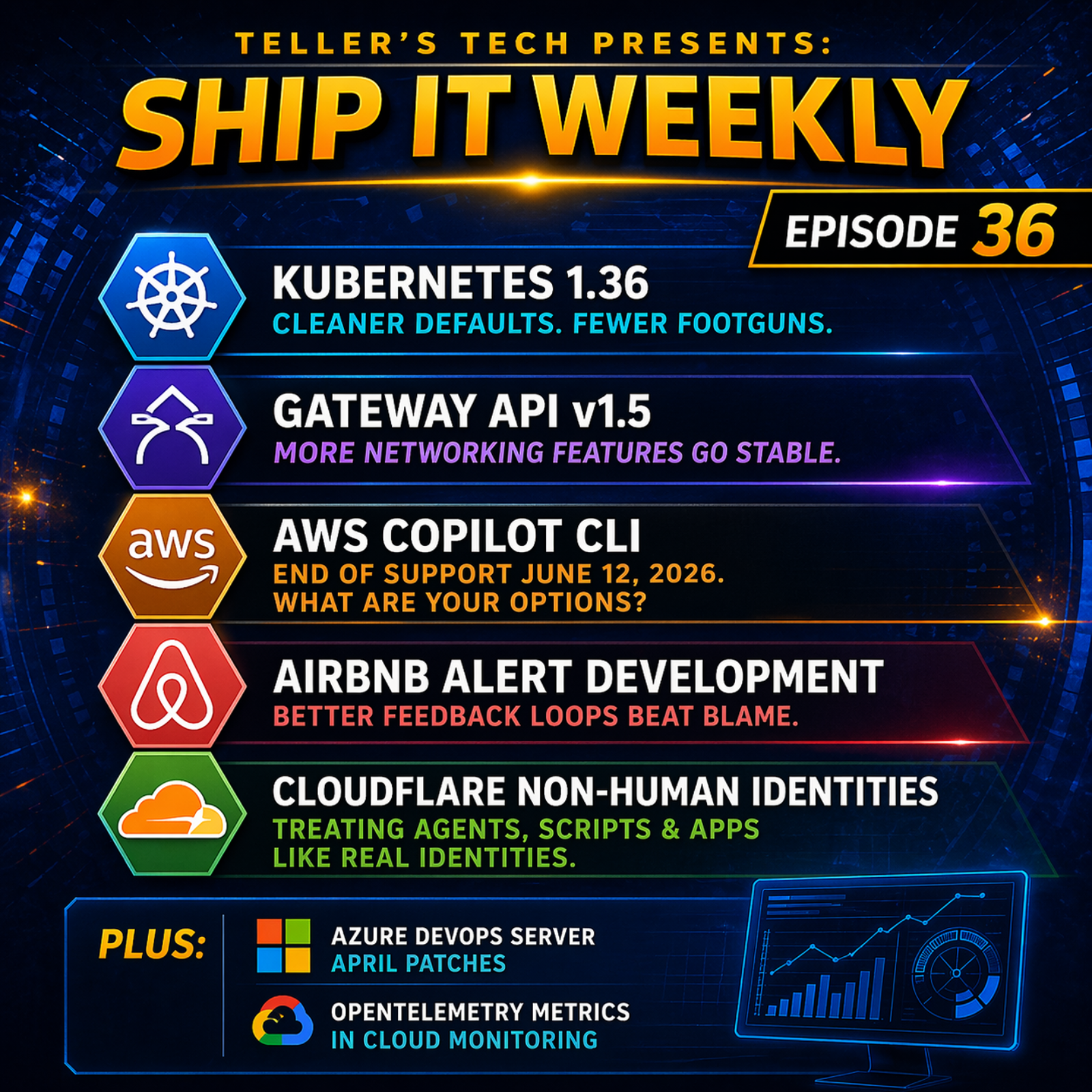

You know that moment when a platform stops sounding

0:02

helpful and starts sounding serious? That's this

0:05

week. Kubernetes is cleaning house. Gateway API

0:08

keeps getting more official. AWS is moving on

0:11

from Copilot. Airbnb is showing why production

0:14

should not be your first alert test. And Cloudflare

0:18

is reminding everybody that a bot with a token

0:21

is still a principal with blast radius. Hey,

0:41

I'm Brian Teller. I work in DevOps and SRE and

0:44

I run Teller's Tech. This is Ship It Weekly,

0:47

where I filter the noise and focus on what actually

0:50

changes how we run infrastructure and own reliability.

0:53

Show notes and links are on shipitweekly.fm.

0:57

If the show's been useful, follow it wherever

0:59

you listen. Ratings help way more than they should.

1:02

We have five main stories today, then the lightning

1:05

round, and we'll wrap with the human closer.

1:07

We're starting with Kubernetes 1.36 because

1:10

this feels like one of those releases where the

1:13

project keeps sanding off older sharp edges while

1:16

pushing more production-grade features into stable

1:19

territory. Then Gateway API version 1.5, which

1:24

is basically SIG Network saying the future is

1:27

not just coming. It is getting promoted into

1:29

the stable path now. After that, AWS Copilot

1:32

CLI end of support because this is a very real

1:36

platform story. One easy path is aging out and

1:40

AWS is pretty clearly nudging people towards

1:42

the next preferred path. Then we've got Airbnb

1:45

on alert development, which is probably my favorite

1:49

SRE story in the set because it is really about

1:52

how better feedback loops beat blaming culture.

1:55

And finally, Cloudflare on non-human identities.

1:59

Because this is one of the clearest examples

2:01

lately of security vendors saying out loud that

2:04

scripts, agents, and third-party tools need

2:08

to be treated like first-class identities, not

2:10

side characters. Story 1. Kubernetes 1.36 feels

2:19

like a maturity release. Let's start there. Kubernetes

2:22

1.36 shipped on April 22nd. The release includes

2:27

70 enhancements with 18 graduating to stable,

2:31

25 entering beta, and 25 moving to alpha. That

2:35

alone gives you the usual big release energy,

2:38

but the more interesting part is what it says

2:41

about where the project is spending its maturity

2:44

budget. One of the clearest examples is the Service

2:46

.spec.externalIPs field. Kubernetes says that field

2:51

is now deprecated, calls it a known security

2:54

headache, and points back to the long-running

2:57

man-in-the-middle risk around CVE-2020-8554.

3:02

You'll see warnings now, and full removal is

3:05

planned for version 1.43. Kubernetes is also

3:09

permanently disabling the old gitRepo volume

3:12

plugin in 1.36, saying that path is closed for

3:17

good and existing workloads need to move to alternatives

3:21

like init containers or external Git sync style

3:24

tools. That's why I like this story, because

3:27

this is not just look at all of the new stuff.

3:30

It is Kubernetes continuing to act like a platform

3:33

that wants fewer half-legacy footguns hanging

3:37

around forever. And honestly, that is what maturity

3:40

often looks like. Not more knobs, fewer weird

3:43

things everybody knows are sketchy, but keeps

3:46

tolerating anyway. And there's another angle

3:49

here that makes this release feel more grown

3:51

up than flashy. Kubernetes 1.36 is also pushing

3:55

more of the supply chain and packaging story

3:58

towards cleaner primitives. The release highlights

4:01

support for packaging read-only application

4:04

data, models, and static assets as OCI artifacts

4:08

and delivering them to pods through the same

4:11

registries and versioning workflows teams already

4:15

use for container images. It also calls out staleness

4:18

mitigation work for controllers, which matters

4:21

because a lot of weird controller behavior does

4:24

not show up as a dramatic crash. It shows up

4:27

as a controller acting on stale assumptions at

4:30

the wrong time and doing the wrong thing very

4:33

confidently. So the practical read for me is

4:36

this. If you run Kubernetes at any real scale,

4:39

1.36 is the kind of release where you should

4:42

not just skim the release notes for what's new.
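
One way to act on that reading is to audit a cluster for the two older paths mentioned above: Services that still set spec.externalIPs and Pods that still mount gitRepo volumes. The sketch below is a minimal, hypothetical helper (the function name and output shape are assumptions, not an official tool). It expects the parsed JSON from `kubectl get services -A -o json` and `kubectl get pods -A -o json`.

```python
def find_deprecated_usage(service_list: dict, pod_list: dict) -> dict:
    """Return namespace/name for Services using spec.externalIPs
    and for Pods that declare a gitRepo volume."""
    services = [
        f"{item['metadata']['namespace']}/{item['metadata']['name']}"
        for item in service_list.get("items", [])
        # A non-empty externalIPs list means the deprecated field is in use.
        if item.get("spec", {}).get("externalIPs")
    ]
    pods = [
        f"{item['metadata']['namespace']}/{item['metadata']['name']}"
        for item in pod_list.get("items", [])
        # Each volume is a dict keyed by its volume type, e.g. {"name": ..., "gitRepo": {...}}.
        if any("gitRepo" in vol for vol in (item.get("spec", {}).get("volumes") or []))
    ]
    return {"external_ips": services, "git_repo_volumes": pods}
```

In practice you would load the two kubectl outputs with `json.load` and pass them in. Checking Pods only catches running workloads, so the same check against Deployment and StatefulSet templates would be a natural extension.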

4:45

You should skim them for what old thing are they

4:48

finally done tolerating? And what newer path

4:51

is now stable enough that we should stop calling

4:54

it experimental in our own heads? Because that's

4:57

usually where the real platform signal is. Story

5:04

2. Gateway API version 1.5 is the networking

5:08

future getting more real. Next up, Gateway API.

5:11

SIG Network announced Gateway API version 1.5

5:15

on April 21st, called it the biggest release

5:18

yet, and said the focus was moving existing experimental

5:21

features into the standard, meaning stable, channel.

5:25

The release promotes six features that people

5:27

actually care about: ListenerSet, TLSRoute,

5:31

the HTTPRoute CORS filter, client certificate

5:35

validation, certificate selection for Gateway

5:37

TLS origination, and ReferenceGrant. They also

5:41

moved to a release train model, which basically

5:44

means features ship when they are ready at freeze

5:47

time instead of waiting for some perfect bundle.

5:50

That matters because Gateway API is no longer

5:53

just a nice future-facing idea for people who

5:56

like cleaner abstractions. It keeps becoming

5:58

the actual road forward. Especially now that

6:01

Kubernetes itself is pointing people towards

6:04

Gateway API in places where older patterns are

6:07

being deprecated. And especially after all of

6:10

the broader ingress and controller retirement

6:12

pressure we've already been seeing this year.

6:15

So to me, the real read here is simple. The networking

6:18

control plane in Kubernetes keeps getting more

6:22

explicit, more standardized, and less willing

6:25

to leave everything in the controller-specific

6:27

magic plus annotations bucket forever. And some

6:31

of the specific Gateway API promotions are worth

6:34

slowing down on for a second. ListenerSet is

6:37

interesting because it gives teams a cleaner

6:39

way to contribute listeners to a gateway without

6:43

forcing everything into one giant resource owned

6:46

by one team. TLSRoute going stable matters because

6:50

it makes the TLS passthrough and terminate use

6:53

cases feel more first-class. The CORS filter

6:56

moving into the standard channel is also one

6:59

of those small-looking things that matters a

7:02

lot in real life because it is exactly the kind

7:05

of behavior people used to bury in controller

7:08

specific config or app-side workarounds. And

7:11

the move to a release train model is its own

7:14

kind of maturity signal too. It means the project

7:16

is optimizing less for perfect bundling and more

7:20

for predictable forward motion. So if I'm a platform

7:23

team, the takeaway is not just "cool, more Gateway

7:26

API stuff." It is probably: how much of our ingress

7:30

and edge behavior is still trapped in annotations.
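
A rough way to start answering that question is to count which annotation prefixes show up across your Ingress objects. This is a minimal sketch under stated assumptions: the function name is made up for illustration, and the input is the parsed JSON from `kubectl get ingress -A -o json`. Prefixes tied to one controller are a hint that the behavior lives in controller-specific config.

```python
from collections import Counter


def annotation_prefix_counts(ingress_list: dict) -> Counter:
    """Count annotation keys on Ingress objects, grouped by the part before the '/'."""
    counts = Counter()
    for item in ingress_list.get("items", []):
        annotations = item.get("metadata", {}).get("annotations") or {}
        for key in annotations:
            # "nginx.ingress.kubernetes.io/enable-cors" -> "nginx.ingress.kubernetes.io"
            prefix = key.split("/", 1)[0] if "/" in key else "(no prefix)"
            counts[prefix] += 1
    return counts
```

Calling `annotation_prefix_counts(data).most_common()` gives a ranked list to walk through with the team. It will not tell you which annotations have a Gateway API equivalent, only where the volume is.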

7:33

How much of it is controller-specific and how

7:35

much of it now has a cleaner upstream-shaped

7:38

home we should actually plan for? Story three,

7:45

AWS Copilot is reaching end of support. And

7:49

that tells you a lot. Now to AWS. AWS says Copilot

7:53

CLI reaches end of support on June 12th, 2026.

7:58

It will remain available as an open source project

8:01

on GitHub. But AWS says it will no longer receive

8:04

new features or security updates from AWS. In

8:08

the same announcement, AWS points users towards

8:11

Amazon ECS Express Mode and AWS CDK Layer 3 constructs

8:16

as the migration paths they want people evaluating.

8:19

This is one of those stories I like because it

8:21

is not really about the tool alone. It is about

8:24

the platform preference. Copilot was AWS's opinionated

8:28

developer-friendly path for developing containerized

8:31

apps on ECS and App Runner. Now AWS is pretty

8:35

clearly saying the newer opinionated paths are

8:39

somewhere else. That does not mean Copilot users

8:41

did anything wrong. It just means the center

8:44

of gravity moved. And that is a very real cloud

8:47

platform lesson. Sometimes the easy path you

8:50

picked was genuinely the right call at the time.

8:53

Then the provider evolves. The preferred abstractions

8:56

change. And now your job is not debating whether

8:59

the shift is fair. Your job is planning the migration

9:02

before the old path becomes operational debt

9:06

with a calendar attached to it. And there is a

9:09

migration planning lesson here that I think gets

9:12

missed when people hear end of support. Copilot

9:15

did a lot more than just offer a nicer CLI. AWS

9:19

says it used CloudFormation stacks for the app

9:22

and service layers, which means a lot of teams

9:24

probably have more Copilot-shaped infrastructure

9:27

under the hood than they remember. So the right

9:31

response here is not panic. It is inventory.

9:34

What workloads are still on Copilot? Which ones

9:37

are App Runner versus ECS? Which parts of the

9:41

deployment flow are tied to Copilot convention?

9:44

And whether the real destination should be Express

9:47

Mode for simplicity or CDK L3 constructs for

9:51

teams that want stronger IaC controls and customization.
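
Since Copilot manages its resources through CloudFormation stacks, the inventory step can begin with a pass over your stacks. The sketch below is hedged: it assumes Copilot-created stacks carry a `copilot-application` tag, which you should confirm against your own account, and the function name is hypothetical. The input is a list of stack dicts shaped like the `Stacks` entries returned by CloudFormation's DescribeStacks call.

```python
from collections import defaultdict

# Assumption: Copilot tags the stacks it creates with this key. Verify in your account.
COPILOT_APP_TAG = "copilot-application"


def group_copilot_stacks(stacks: list[dict]) -> dict[str, list[str]]:
    """Group stack names by the Copilot application tag; untagged stacks are skipped."""
    grouped = defaultdict(list)
    for stack in stacks:
        tags = {t["Key"]: t["Value"] for t in stack.get("Tags", [])}
        app = tags.get(COPILOT_APP_TAG)
        if app is not None:
            grouped[app].append(stack["StackName"])
    return {app: sorted(names) for app, names in grouped.items()}
```

The result is a per-application list of stacks, which is a reasonable starting spreadsheet for deciding what moves to Express Mode and what moves to CDK.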

9:55

AWS is pretty explicit that Express Mode is

9:59

meant to preserve a lot of the simplicity that

10:02

made Copilot attractive, while CDK L3 is the

10:06

more customizable path. That is the kind of thing

10:08

I would actually say out loud to a team. Do not

10:11

turn this into a philosophical debate about whether

10:14

Copilot was good. Assume it was good for when

10:17

you picked it. Now ask, what will be annoying

10:20

to unwind later if you ignore the date now? Story

10:27

4. Airbnb says alert pain was a workflow problem,

10:31

not a culture problem. This one is probably my

10:34

favorite in the whole episode. Airbnb says the

10:37

issue with alert development was not that engineers

10:41

did not care. It was that their observability

10:43

as code workflow had a blind spot. Code review

10:47

could validate syntax and logic, but not actual

10:50

alert behavior against real-world data. So production

10:54

kept becoming the proving ground. Airbnb says

10:57

they built fast feedback loops to preview, validate,

11:01

and surface alert behavior before PR submission,

11:05

cut development cycles from weeks to minutes,

11:07

and used the workflow to help migrate 300,000

11:11

alerts from a vendor to Prometheus. They also

11:14

say what used to take a month of iteration can

11:17

now take an afternoon. This is such a good SRE

11:20

story. Because it is very easy to look at noisy

11:24

alerts, weak alerts, or slow alert iteration

11:26

and say, this is just a culture problem, or people

11:30

just need to care more. Sometimes, sure. But

11:33

a lot of the time, the workflow is just bad.

11:35

If the only way to see whether an alert behaves

11:38

correctly is to merge it and wait, then you built

11:41

a system where production is the testing harness

11:44

and on-call is the feedback loop. That is not

11:47

a motivation issue. That is a tooling issue.
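
To make the "preview before merge" idea concrete, here is a toy backtest. It is not Airbnb's tooling and not Prometheus's rule engine, just a simplified model with hypothetical names: given historical samples, a threshold, and a hold duration (similar in spirit to a `for:` clause), it reports when the alert would have fired.

```python
def firing_intervals(samples, threshold, for_seconds):
    """samples: list of (timestamp_seconds, value), sorted by time.
    Returns (start, end) pairs where the value stayed above the threshold
    for at least for_seconds; start is the moment the alert would begin firing."""
    intervals = []
    pending_since = None  # when the condition first became true
    firing_since = None   # when the hold duration was satisfied
    last_ts = None
    for ts, value in samples:
        if value > threshold:
            if pending_since is None:
                pending_since = ts
            if firing_since is None and ts - pending_since >= for_seconds:
                firing_since = ts
        else:
            if firing_since is not None:
                intervals.append((firing_since, ts))
            pending_since = None
            firing_since = None
        last_ts = ts
    # Close an interval that is still firing at the end of the data.
    if firing_since is not None:
        intervals.append((firing_since, last_ts))
    return intervals
```

Running the same history with two different hold durations shows the delta a reviewer would want to see: a short spike that pages with no hold duration may disappear entirely once a two-minute hold is added.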

11:50

And Airbnb's fix is basically the kind of thing

11:53

platform teams should love. Earlier feedback,

11:56

more confidence inside the PR, less wasted iteration

12:00

after the fact. And Airbnb made another design

12:03

choice here that I really liked. They explicitly

12:06

chose compatibility over novelty. Instead of

12:09

inventing some exotic, proprietary alert analysis

12:13

model, they took Prometheus rule groups as the

12:17

input, used Prometheus's own rule evaluation

12:19

engine, and wrote the results back out as Prometheus

12:23

time series blocks, exposed through the standard

12:26

query API. That is a really smart platform move

12:29

because it means the preview and analysis system

12:32

fits into the workflows engineers already understand,

12:36

instead of becoming one more internal snowflake

12:39

everybody has to relearn. That matters because

12:42

a lot of internal platform tooling dies, not

12:45

because the idea was bad, but because the workflow

12:47

becomes "learn our special thing first." Airbnb's

12:51

version sounds more like use the standards, use

12:54

the real engine, show people the delta before

12:56

they merge, and make the right thing easier than

12:59

the lazy thing. That is honestly a great pattern

13:02

well beyond alerting. Story five. Cloudflare

13:09

is saying non-human identity is now the real

13:13

identity story. Last main story. Last time in

13:16

episode 34, Cloudflare was talking about the

13:19

network fabric for agents. This time, they're

13:22

talking about the identity, token, and permission

13:25

model around them. Cloudflare's framing here

13:27

is very direct. Identities are not just people

13:30

anymore. They are agents, scripts, and third-party

13:33

tools acting on your behalf. Their update packages

13:37

that into three practical areas: scannable API

13:40

tokens, better OAuth visibility and revocation,

13:43

and more granular resource-scoped RBAC. Cloudflare

13:47

says new tokens are easier for scanners to recognize.

13:50

Customers now get a central connected applications

13:53

experience for OAuth access and revocation. And

13:57

resource-scoped permissions are available for

14:00

more resources so both users and agents can be

14:03

right-sized more tightly. They also say these

14:06

scopes can be assigned through the dashboard,

14:09

the API, or Terraform. And I think that this

14:12

story matters because it is one of the clearer

14:15

examples of the industry dropping the pretense.

14:18

An agent with a token is not some cute helper.

14:21

It is an identity with power. A script with standing

14:24

access is not background noise. It is an identity

14:28

with power. A third-party OAuth app is not just

14:32

a convenience. It is an identity with power.

14:35

And once you accept that, the rest of the story

14:38

gets more normal. Token scanning, connected app

14:41

visibility, permission scoping, least privilege.

14:45

This is just IAM growing up around modern workloads

14:48

and agent-heavy environments. And I also like

14:51

that Cloudflare is not treating this as some

14:54

abstract future of security thing. The token

14:57

changes are practical. They added a recognizable

15:00

prefix and checksum so scanners can identify

15:04

Cloudflare tokens with much higher confidence.
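
Why a prefix plus a checksum helps scanners can be shown with a made-up token format. Everything here is hypothetical: the `exmpl_` prefix, the 24-character body, and the CRC32 checksum are illustration only and are not Cloudflare's actual format. The point is that a scanner can reject lookalike strings without calling any API.

```python
import re
import zlib

PREFIX = "exmpl"  # hypothetical prefix, not a real vendor's
# 24-character body followed by an 8-hex-digit checksum of that body.
TOKEN_RE = re.compile(r"\bexmpl_([A-Za-z0-9]{24})([0-9a-f]{8})\b")


def make_token(body: str) -> str:
    """Build a token in the made-up format: prefix, body, CRC32 of the body."""
    checksum = format(zlib.crc32(body.encode()), "08x")
    return f"{PREFIX}_{body}{checksum}"


def find_tokens(text: str) -> list[str]:
    """Return only candidates whose checksum matches, cutting false positives."""
    found = []
    for match in TOKEN_RE.finditer(text):
        body, checksum = match.group(1), match.group(2)
        if format(zlib.crc32(body.encode()), "08x") == checksum:
            found.append(match.group(0))
    return found
```

A regex alone would flag any string with the right shape. The checksum step is what lets a scanner say with much higher confidence that a match is a real credential and worth an immediate revocation.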

15:08

The OAuth work is practical too. You can review

15:11

the app name, publisher, requested scopes, and

15:14

which accounts the app is asking to access before

15:18

you approve it, and then see those connected

15:20

applications later in one place to revoke them

15:24

if needed. And their resource-scoped permissions

15:27

framing is probably the most useful mental model

15:30

in the whole post. The token gets you in the

15:32

building. But scopes should decide which rooms

15:35

you can enter. That is the part I think teams

15:37

should take seriously. If you are letting agents,

15:40

scripts, and third-party automations pile up

15:43

with broad standing access, you do not really

15:46

have an AI governance problem. You have an IAM

15:49

hygiene problem that got new branding. Okay,

15:59

a few quick ones before we wrap. Microsoft shipped

16:02

April patches for Azure DevOps Server and says

16:06

they strongly recommend staying on the latest

16:09

secure version. The patch fixes a null reference

16:12

issue that could break pull request completion

16:15

during work item auto-completion, improves sign-out

16:18

validation to prevent potential malicious

16:21

redirects, and fixes PAT connection creation

16:24

for GitHub Enterprise Server. That is a very

16:28

practical patch-now item. Google also pushed more

16:31

on OTLP metrics for Cloud Monitoring. Google

16:35

says you can send metrics to Cloud Monitoring

16:37

through a provider-agnostic OpenTelemetry pipeline,

16:41

store that data in the same format as Managed

16:44

Service for Prometheus, and query it through

16:47

the same Cloud Monitoring interfaces. That is

16:49

a nice observability standard story because it

16:52

is not just support the protocol. It is make

16:55

the protocol path actually first-class. I think

17:06

the human thread underneath this week's episode

17:08

is that a lot of engineering pain comes from

17:11

waiting too long to make responsibilities explicit.

17:14

Kubernetes is making some of that explicit by

17:17

deprecating or removing paths it clearly does

17:20

not want to keep carrying forever. Gateway API

17:23

is making networking intent more explicit. AWS

17:27

is making platform preference more explicit by

17:30

telling people where Copilot stops and where

17:33

the next preferred paths begin. Airbnb is making

17:36

alert quality less dependent on vague craftsmanship

17:40

and more dependent on visible feedback before

17:43

merge. And Cloudflare is making it harder to

17:46

pretend that agents and scripts are somehow outside

17:49

the normal identity and access conversation.

17:52

And this is where I think platform work gets

17:54

misunderstood sometimes. People talk about maturity

17:57

like it means more automation, more abstraction,

18:00

more paved roads. Sometimes it does. But just

18:03

as often, maturity is really about being honest

18:06

sooner. Honest about which patterns are legacy.

18:09

Honest about which workflows are broken. Honest

18:12

about which tools are losing support. Honest

18:15

about whether production is secretly your only

18:17

validation environment. Honest about who or what

18:21

actually has access in your environment. That

18:24

is not as fun as a big shiny launch. But it is

18:27

usually where a lot of the real risk reduction

18:30

comes from. Because the longer a team stays fuzzy

18:33

on ownership, the more that fuzziness turns into

18:37

toil. And the more that toil turns into staffing

18:40

pain. And the more that that staffing pain turns

18:43

into reliability pain. Not because anybody is

18:46

lazy. Not because people do not care. Usually

18:49

just because too many systems stayed ambiguous

18:52

for too long. So yeah, that's probably my biggest

18:57

takeaway from this week. Better platforms do

18:59

not just make things easier. They make certain

19:02

kinds of vagueness harder to sustain. And honestly,

19:05

that is usually a good thing. All right. That's

19:08

it for this episode of Ship It Weekly. Quick

19:11

recap. Kubernetes 1.36 and why it feels like

19:15

a maturity release. Gateway API version 1.5

19:18

moving more core networking features into stable

19:21

territory. AWS Copilot reaching end of support

19:25

and what that says about shifting preferred paths.

19:28

Airbnb proving alert pain was a workflow gap,

19:32

not just a cultural issue. And Cloudflare making

19:35

the case that non-human identity is now a core

19:38

security story. Then in the lightning round,

19:41

Azure DevOps Server patches and Google Cloud

19:44

OTLP metric support. Links and show notes are

19:48

on shipitweekly.fm. You can also find video

19:51

versions on YouTube. If this episode was useful,

19:54

follow or subscribe wherever you listen and send

19:57

it to the person on your team who keeps having

19:59

to explain that reliability problems are usually

20:02

workflow problems long before they become on-call

20:05

problems. I'm Brian, and I'll see you next

20:08

week.
