0:00

Developer tooling used to feel like the safe

0:02

part of the system. Production was the scary

0:05

place. The database, the cluster, the load balancer,

0:08

the pager. And then everything around that was

0:11

just tooling. GitHub, CICD, merge queues, package

0:16

publishing, code review bots, AI agents. But

0:20

that line is getting harder to defend. Because

0:22

when the tooling can merge code, publish artifacts,

0:26

leak secrets, spend money, or decide what gets

0:29

shipped, it is not just sitting beside production

0:31

anymore. It is part of production. And this week,

0:34

that idea showed up everywhere. Hey, I'm Brian

0:54

Teller. I work in DevOps and SRE, and I run Teller's

0:57

Tech. This is Ship It Weekly, where I filter

0:59

the noise and focus on what actually changes

1:02

how we run infrastructure and own reliability.

1:04

Show notes and links are on shipitweekly .fm.

1:08

If this show's been useful, follow it wherever

1:10

you listen. Ratings help way more than they should.

1:12

And if you are watching on YouTube, subscribe

1:14

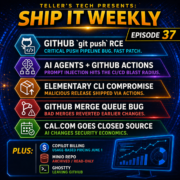

there too. We have six main stories today, then

1:18

the lightning round, and we'll wrap with the

1:20

human closer. We're starting with GitHub's critical

1:22

Git Push remote code execution vulnerability,

1:26

including the AI reverse engineering angle from

1:29

Wiz. Then we'll talk about prompt injection against

1:32

AI agents tied into GitHub workflows. After that,

1:36

Elementary's CLI supply chain incident, where

1:39

a GitHub actions workflow became the path to

1:42

a malicious release. Then GitHub's April 23rd

1:45

Merge Queue regression. Then cal .com going closed

1:48

source, which is probably the most argument -starting

1:52

story in this set. And finally, GitHub Copilot

1:55

moving to usage -based billing because AI tooling

1:58

is becoming a governance and cost management

2:00

problem, not just a productivity experiment.

2:04

Then in the lightning, we'll hit MinIO, Ghostty,

2:07

Docker -hardened images, and Azure DevOps security

2:10

updates. Story one. GitHub's GitPush RCE is a

2:18

toolchain wake -up call. Let's start with GitHub.

2:21

GitHub disclosed a remote code execution vulnerability

2:24

in the GitPush pipeline. The issue affected GitHub

2:28

.com, GitHub Enterprise Cloud, GitHub Enterprise

2:31

Cloud with Data Residency, Enterprise Managed

2:34

Users, and GitHub Enterprise Server. GitHub says

2:38

Wiz reported the bug through the bug bounty program

2:40

on March 4th. GitHub reproduced it internally

2:43

within about 40 minutes, fixed GitHub .com in

2:47

under two hours, and says it found no evidence

2:49

of exploitation. That is the reassuring part.

2:52

The uncomfortable part is the shape of the bug.

2:55

The vulnerability involved user -supplied Git

2:57

push options. Those push options ended up influencing

3:01

internal metadata passed between GitHub services.

3:05

Because of how that metadata was formatted, an

3:07

attacker could inject extra fields that a downstream

3:10

service treated as trusted internal data. And

3:14

once user input can shape trusted internal metadata,

3:17

things get ugly fast. GitHub says the researchers

3:20

showed they could override the environment where

3:23

the push was processed, bypassing sandboxing

3:26

around hook execution, and ultimately execute

3:29

arbitrary commands on the server handling the

3:31

push. That is a lot hiding behind the phrase

3:34

Git push. And that is why this belongs in a DevOps

3:37

and SRE conversation, not just a security newsletter.

3:41

Every team treats Git as normal plumbing. Humans

3:44

push code. Bots push code. Automation responds

3:47

to pushes. CI kicks off. Release workflows start.

3:51

Security scanners run. Deployment paths wake

3:54

up. But behind that one command is a hosting

3:57

platform. Internal service boundaries. Hook execution.

4:00

Sandboxing. Audit logs. And identity. That is

4:04

infrastructure. For GitHub .com and enterprise

4:06

cloud users, GitHub says the platform was patched

4:10

and no customer action is needed. For GitHub

4:12

enterprise server customers, this is a patch

4:15

now situation. GitHub specifically recommends

4:17

upgrading supported G -H -E -S releases and reviewing

4:22

audit logs for suspicious push operations. But

4:25

the bigger story may be how Wiz found it. Dark

4:27

reading covered the AI -assisted reverse engineering

4:30

side. Wiz used AI tooling to help analyze GitHub

4:34

Enterprise Server, understand compiled binaries

4:37

and internal service behavior, and find a bug

4:39

that historically may have taken weeks or months

4:42

to uncover. They reportedly went from the idea

4:45

to working exploit in under 48 hours. That is

4:49

the part that should stick with us. Not because

4:51

AI found a bug, like the robot woke up and became

4:54

a hacker. The real story is that AI lowered the

4:56

cost of understanding complex systems. A lot

5:00

of software has been protected informally by

5:02

being annoying. Hard to reverse engineer. Hard

5:05

to understand. Too much glue. Too many binaries.

5:08

Too many internal assumptions. That was never

5:11

real security, but it was friction. And friction

5:14

matters. If AI removes enough of that friction,

5:17

then systems that were probably fine because

5:20

nobody will spend the time become more interesting

5:23

targets. So the takeaway is not panic. It is

5:27

that boring security engineering matters more

5:29

when discovery gets faster. Input validation.

5:32

Trust boundaries. Least privilege. Patch velocity.

5:36

Audibility. Because when the cost of finding

5:39

bugs drop, the cost of sloppy assumptions go

5:42

up. Story 2. Prompt injection is now in the CI

5:50

-CD blast radius. Next up, AI agents and GitHub

5:54

actions. The register covered research showing

5:56

prompt injection attacks against AI agents from

6:00

Anthropic, Google, and Microsoft tied to GitHub

6:03

workflows. The basic shape is pretty simple.

6:06

AI agents read GitHub data, pull request titles,

6:10

issue bodies, issue comments, review comments,

6:13

markdown, the stuff developers and contributors

6:16

write all day. So if an attacker can place malicious

6:19

instructions into that data and an agent later

6:22

processes it with access to tools or secrets,

6:25

the attacker may be able to steer the agent.

6:28

In the Claude case, researchers showed that a

6:31

malicious PR title could influence the bot and

6:34

leak credentials into a review comment. With

6:37

Gemini, issue comments and prompt injection were

6:41

used to expose an API key. With GitHub Copilot

6:44

Agent, malicious instructions could be hidden

6:47

in an HTML comment that humans would not see

6:50

in rendered markdown. The victim assigns the

6:53

issue to Copilot, but the payload is already

6:55

sitting there in the context. The researchers

6:58

demonstrated theft of Anthropic and Gemini API

7:01

keys, multiple GitHub tokens, and potentially

7:04

any secret exposed to the GitHub Actions Runner

7:07

environment. That last phrase is the one I care

7:10

about. The GitHub Actions Runner environment.

7:13

Because this is not just an AI story. This is

7:16

a CICD story. If your agent runs in GitHub Actions,

7:20

and GitHub Actions has access to tokens, deployment

7:24

credentials, package publishing rights, cloud

7:27

permissions, Slack webhooks, or JIRA credentials,

7:30

then prompt injection becomes part of that blast

7:34

radius. The researcher called this comment and

7:37

control, which is a play on command and control.

7:40

And it fits. The attacker may not need malware.

7:44

They may not need an external callback. They

7:47

may not need to trick a human into clicking anything.

7:50

They can write data into GitHub, let automation

7:52

read it, and let the automation leak the secret

7:55

back into GitHub. That is a nasty little loop.

7:59

And this is where I think teams are a little

8:01

too cautious about agents. A code review bot

8:03

should not have more access than it needs. An

8:06

issue summarizer should not have repository write

8:09

access. A documentation bot does not need cloud

8:12

keys. And if you are passing untrusted GitHub

8:15

content into an agent that can use tools, run

8:19

commands, or read secrets, that is not a harmless

8:22

productivity flow. That is untrusted input hitting

8:25

an execution environment. We have had that category

8:28

of problem for decades. AI did not invent it.

8:31

AI just made the parser weird. Story 3. Elementary's

8:39

CLI incident is the supply chain story in miniature.

8:43

Now let's talk about elementary. Elementary Data

8:46

published a security incident report for a malicious

8:49

release of the elementary open source Python

8:51

CLI version 0 .23 .3. On April 24th, a malicious

8:57

CLI package was published to PyPI, and a malicious

9:01

Docker image was published to their registry.

9:03

Those artifacts were not produced by the elementary

9:06

team. According to elementary, the attacker opened

9:09

a PR with malicious code and exploited a script

9:13

injection vulnerability in one of their GitHub

9:15

actions workflows to publish the release. That

9:19

is the modern supply chain anxiety loop in one

9:21

story. A pull request. A workflow vulnerability.

9:25

a release -looking artifact, PyPI, Docker, and

9:29

downstream users who now have to assume any credentials

9:32

accessible to the environment where that CLI

9:35

ran may have been exposed. Elementary removed

9:38

the malicious release, published a safe 0 .23

9:42

.4, removed the vulnerable workflow, rotated

9:45

affected credentials, and moved the OIDC authentication

9:49

where possible. That response sounds pretty solid,

9:52

but the attack path is the real lesson. A lot

9:55

of teams still think of GitHub Actions as a convenience

9:58

layer. But for many projects, GitHub Actions

10:01

is the release authority. It builds containers.

10:04

It publishes packages. It signs things. It pushes

10:07

to registries. It decides what gets shipped.

10:10

So if that workflow has script injection, broad

10:14

permissions, long -lived tokens, or too much

10:16

trust in PR -controlled input, then your release

10:20

system can become the attack path. The question

10:23

is not only do you trust your source code? It

10:26

is also do you trust the automation that turns

10:29

source code into artifacts? Those are not the

10:32

same question anymore. You can have a clean main

10:34

branch and a vulnerable release workflow. You

10:37

can have signed releases and a compromised signing

10:40

path. You can rotate app credentials and still

10:43

forget that the package publisher token has been

10:46

sitting in CI forever. So the practical playbook

10:49

is boring but important. Check workflow permissions.

10:52

Avoid long -lived publishing tokens where OIDC

10:56

is available. Be careful with shell interpolation

10:59

from PR titles, branch names, issue comments,

11:02

tags, and commit messages. Split, build, and

11:06

publish. Protect release environments. And threat

11:09

model GitHub Actions like production. Because

11:12

if GitHub Actions can publish your package, it

11:15

is production. It might not serve customer traffic,

11:18

but it controls customer trust. Story four, GitHub's

11:25

merge queue regression is a control plane reliability

11:28

story. Next one is also GitHub, but from a reliability

11:32

angle. On April 23rd, GitHub had a merge queue

11:36

regression. GitHub says whole requests merged

11:39

through merge queue using squash merge produced

11:42

incorrect merge commits when a merge group contained

11:45

more than one pull request. In some affected

11:48

cases, changes from previously merged PRs and

11:52

prior commits were accidentally reverted by later

11:55

merges. GitHub says that 658 repositories and

12:00

2 ,092 pull requests were affected. No data was

12:04

lost because the commits still existed in Git.

12:07

But affected default branches ended up in an

12:10

incorrect state. And GitHub could not safely

12:13

repair every repository automatically. That is

12:16

a painful incident. And it is painful because

12:18

merge queues exist to reduce risk. That is the

12:22

whole point. They are supposed to make high -change

12:24

environments safer. Instead of everyone racing

12:27

to merge against a moving base branch, the queue

12:29

group changes, validates them, and lands them

12:32

in a more orderly way. So when that control plane

12:35

gets a bad behavior, it creates a weird kind

12:39

of incident. Not the site is down. Not the database

12:42

is corrupted. But the mechanism we trust to preserve

12:45

correctness just produced a branched state that

12:48

is not what we think it is. That is subtle. And

12:51

subtle is dangerous. Because if a deploy fails

12:53

loudly, you know. If an API goes red, you know.

12:57

If a merge queue quietly reverts earlier changes,

13:00

you might not notice until the wrong code is

13:03

already in production. The practical takeaway

13:05

is not don't use merge queues. I still like merge

13:08

queues. The takeaway is that any environment

13:11

with merge authority needs SRE thinking. Good

13:14

audit trails. Easy ways to identify what changed.

13:18

Detection when a branch state does something

13:21

weird. Clear ownership when the thing that protects

13:24

main becomes the thing that damages main. Merge

13:27

queues, feature flag systems, CI schedulers,

13:31

artifact registries, deployment orchestrators,

13:34

GitOps controllers. These are control planes.

13:37

They may not be in the request path, but they

13:40

shape what production becomes. Story five. Cal

13:47

.com going closed source is the argument everyone

13:51

will want to have. Now for the spicy one. Cal

13:54

.com announced that it is going closed source.

13:57

They said that after five years as open source

14:00

champions, they are moving the production code

14:03

base closed because AI has changed the security

14:06

landscape. Their argument is basically this.

14:09

In the past, exploiting an application required

14:11

skilled humans. time, and patience. Now AI can

14:15

be pointed at an open -source codebase and used

14:18

to systematically scan for vulnerabilities. Cal

14:21

.com says that being open -source is increasingly

14:24

like giving attackers the blueprints to the vault.

14:27

They are keeping an open version available as

14:29

cal .diy under the MIT license. But they say

14:33

that the production Cal .com codebase has diverged,

14:36

including rewrites in areas like authentication

14:39

and data handling. I have mixed feelings on this

14:42

one. On one hand, I understand the concern. This

14:45

week's GitHub and Wiz story gives some support

14:48

to the idea that AI can make vulnerability discovery

14:50

faster and cheaper. If you run a SaaS product

14:53

handling sensitive calendar data, authentication

14:56

flows, integrations, and customer information,

14:59

it is not crazy to worry about AI -assisted vulnerability

15:02

mining. On the other hand, closed source equals

15:05

safer is not a clean argument. Closed source

15:09

software still gets reversed. Closed source software

15:12

still has bugs. Closed source software can lose

15:16

the benefit of external review. And hiding code

15:19

is not the same as securing it. So I would not

15:22

frame this as cal .com is right or cal .com is

15:25

wrong. The better framing is that AI is putting

15:29

real pressure on the business and security model

15:32

of commercial open source. For maintainers, open

15:35

source can mean transparency, community, contributions,

15:39

and trust. For customers, it can mean self -hosting,

15:42

auditability, and less vendor lock -in. For attackers,

15:47

it can mean a searchable map of the systems.

15:49

And for a commercial company, it may mean all

15:52

of those things at once, plus support burden,

15:55

vulnerability reports, hosted customer risk,

15:58

and investor pressure. That tension is not new.

16:02

AI just makes it louder. For platform teams,

16:05

the takeaway is not stop using open source. That

16:07

would be ridiculous. The takeaway is stop treating

16:10

project governance and licensing as background

16:13

noise. When a project changes its source model,

16:16

repo model, release model, or commercial posture,

16:20

that is supply chain signal. You do not have

16:22

to panic, but you should notice. Story six, co

16:30

-pilot usage -based billing makes AI a governance

16:33

problem. Last main story. GitHub Copilot is moving

16:37

to usage -based billing on June 1st. GitHub says

16:40

all Copilot plans will transition to GitHub AI

16:44

credits. Instead of counting premium requests,

16:47

plans will include a monthly credit allotment,

16:50

and usage will be calculated based on token consumption,

16:53

including input, output, and cached tokens. Base

16:57

subscription prices are not changing, according

17:00

to GitHub. Code completions and next edit suggestions

17:03

stay included. But agentic usage, longer sessions,

17:07

heavier models, and multi -step workflows move

17:10

more directly into the cost model. And one detail

17:14

jumped out at me. Copilot code review will also

17:17

consume GitHub Actions minutes in addition to

17:20

GitHub AI credits. That is what makes this a

17:23

platform engineering story. Because now AI usage

17:26

is not just a seat cost. It is tokens, model

17:29

choice, cached context, review automation. actions

17:33

minutes, budget controls, and admin visibility.

17:37

And this is the normal cloud story happening

17:39

again. First, it feels magical. Then everyone

17:42

adopts it. Then the bill shows up. Then leadership

17:45

asks, why is it growing? Then teams discover

17:48

they do not have tagging, budgets, ownership,

17:51

quotas, or usage visibility. Then platform teams

17:54

get asked to govern it after it is already everywhere.

17:58

We saw this with cloud. We saw it with observability.

18:01

We saw it with CI minutes. Now we are going to

18:03

see it with AI -assisted development. I am not

18:06

anti -copilot. I use AI tools constantly. But

18:10

the economics are changing. A quick autocomplete

18:12

suggestion and a multi -hour agentic coding session

18:15

are not the same cost profile. A lightweight

18:18

code explanation and a repo -wide refactor are

18:22

not the same cost profile. A human asking a few

18:25

questions and an agent iterating across a codebase

18:28

are not the same cost profile. GitHub is saying

18:31

that more directly now. And teams should probably

18:34

take the hint. If AI behaves like cloud spend,

18:37

you need cloud spend habits. Visibility. Ownership.

18:41

Guardrails. Budgets. And somebody who notices

18:44

before the bill becomes the incident. A few quick

18:53

ones before we wrap. MinIO's main GitHub repo

18:57

was archived by the owner on April 25th and is

19:00

now read -only. The readme says the repository

19:03

is no longer maintained and points users towards

19:06

other options. It also says that the community

19:08

edition is now source -only and that legacy pre

19:12

-compiled binaries will not receive updates.

19:15

That is a big dependency signal if you have MinIO

19:19

in your stack. Ghostty is leaving GitHub. Mitchell

19:23

Hashimoto wrote that Ghostty plans to move off

19:26

of GitHub while keeping a read -only mirror at

19:29

the current URL. The interesting part is his

19:32

framing. He says the problem is not Git itself.

19:35

The problem is the surrounding infrastructure

19:37

that people rely on. Issues, pull requests, actions,

19:41

and platform workflows. Git may be distributed,

19:44

but your actual engineering workflow usually

19:46

is not. Docker published a one -year reflection

19:49

on Docker -hardened images. They say Docker -hardened

19:53

images crossed 500 ,000 daily pulls with more

19:57

than 2 ,000 hardened images, MCP servers, Helm

20:01

charts, and ELS images. That is a nice counterweight

20:04

to the rest of the episode. Not every supply

20:07

chain story is a compromise. Some of the work

20:10

is making safer defaults easier to adopt. Azure

20:13

DevOps added one -click CodeQL setup and org

20:17

-wide alert triage to advance security. Microsoft

20:20

says teams can enable code scanning at the repo,

20:24

project, or organization level without manually

20:27

configuring a pipeline. And security admins get

20:31

a combined alerts view across repositories. Not

20:34

super flashy, but useful when security has to

20:37

scale beyond fixing repo number 417 by hand.

20:48

I think the human thread this week is that engineers

20:51

are still emotionally treating developer tooling

20:53

like it lives outside the blast radius. And it

20:57

does not. The build system is not outside the

20:59

blast radius. The merge queue is not outside

21:02

the blast radius. The package publisher is not

21:05

outside the blast radius. The AI review bot is

21:08

not outside the blast radius. GitHub Actions

21:11

is not outside the blast radius. Copilot billing

21:14

is not outside the governance conversation. The

21:17

open source project that you depend on is not

21:19

outside your supply chain just because you starred

21:22

the repo five years ago. All of this is part

21:25

of production now. Maybe not production in the

21:27

narrow sense, maybe not serving customer traffic

21:30

at this exact second, but production in the operational

21:33

sense. If this breaks, we cannot ship. If this

21:36

is compromised, customers may be affected. If

21:38

this gets expensive, leadership will care. If

21:41

this silently does the wrong thing, production

21:43

may become wrong later. If this changes its licensing

21:46

or maintenance model, our roadmap may get weird.

21:50

That is production. And I think that that shift

21:52

is hard because developer tooling used to feel

21:55

like the comfortable part of engineering. It

21:57

was where we moved fast, where we automated things,

22:00

where we glued things together with tokens and

22:02

YAML and a little bit of we'll clean this up

22:04

later. But the shortcut became the path, and

22:07

the path became the control plane. And the control

22:09

plane now has secrets, merge authority, release

22:13

authority, billing impact, AI behavior, and platform

22:17

dependency risk. That does not mean every team

22:20

needs to panic. It means we need clearer labels.

22:24

A GitHub Actions workflow that publishes to PyPI

22:27

is not just CI. It is a release system. A merge

22:31

queue that lands code on main is not just a productivity

22:34

feature. It is a source control control plane.

22:38

An AI agent with repo access and secrets is not

22:41

just a bot. It is a principal with tools. An

22:45

open source dependency whose repo gets archived

22:48

is not something to look at later. It is a dependency

22:50

management event. And a co -pilot billing change

22:54

is not just pricing. It is a governance signal.

22:57

Once you label the system correctly, you can

22:59

manage it correctly. You can put the right permissions

23:02

around it. You can monitor it. You can budget

23:04

for it. You can patch it. You can separate trusted

23:07

and untrusted input. You can stop pretending

23:10

that the thing with keys to production is somehow

23:13

not production. Reliability is not just keeping

23:16

servers up. It is keeping the paths to production

23:18

trustworthy. And this week, those paths looked

23:21

a little more serious than usual. Alright, that's

23:24

it for this week of Ship It Weekly. Quick recap.

23:27

GitHub patched a critical Git push remote code

23:30

execution vulnerability. And the AI -assisted

23:33

reverse engineering angle may be just as important

23:36

as the bug itself. Researchers showed prompt

23:39

injection attacks against AI agents tied into

23:42

GitHub workflows, which is a pretty clear reminder

23:45

that agents are now in this CI -CD blast radius.

23:49

Elementary Data dealt with a malicious CLI release

23:52

published through a vulnerable GitHub Actions

23:55

workflow. GitHub had a merge queue regression

23:58

that affected more than 600 repositories and

24:01

more than 2 ,000 pull requests. Cal .com is going

24:05

closed source and putting a spotlight on the

24:08

tension between AI, security, open source, and

24:12

business models. And GitHub Copilot is moving

24:15

towards usage -based billing, which means AI

24:18

tooling is becoming a cost and governance conversation,

24:21

not just a productivity conversation. Then in

24:24

the lightning round, we hit MinIO archiving its

24:26

main repository, Ghostty leaving GitHub, Docker

24:30

hardening images crossing 500 ,000 daily pulls,

24:33

and Azure DevOps making CodeQL setup and org

24:37

-wide alert triage easier. Links and show notes

24:40

are on shipitweekly .fm. You can also find the

24:43

video version on YouTube. If this episode was

24:46

useful, send it to the person on your team who

24:48

still says it's just CI. I'm Brian, and I'll

24:51

see you next week.